Dance Dance Revolution and the need for Alternate Human Computer Interaction

Z and I went over to my buddy John's yesterday to borrow his Xbox 360 copy of Dance Dance Revolution. I've been avoiding this game for nearly ten years, partly because I can actually dance in real life (my wife can attest to this, and as she's an African, it's high praise, let me tell you) and DDR doesn't seem like dancing to me, but also because I know I'd get too into it.

But, John talked me into it and I talked my wife, begrudgingly, into playing it once. We didn't stop until 30 songs later. To quote my wife, "I won't stop until I get an 'A'!" She did, on Rapper's Delight, by the way.

I think that we took to this game for the same reason we took to the Wii - it's so physically interactive. After twenty years of twitch-gaming with a controller and my thumbs, and fifteen years of typing for a living, the last thing I want to do is come home and use a controller.

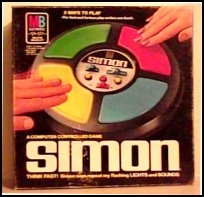

What's so funny to me about Dance Dance Revolution is that it's just another version of Simon - a frenetic real-time psycho game of Simon set to house music while dancing with much gnashing of teeth - but Simon nonetheless.

Really immersive gaming (computing) requires one of two things. Either...

...a controller that mirrors something in reality...

Guitar Hero, another game I played at John's (loan that to me also, John!), is basically the same thing - it's Dance Dance Revolution with a fret. Addictive, surely, and I'm sure the wife will get a kick out of it, but it's a little too close to typing for these hands.

The original Xbox had an amazing (and VERY expensive) game called Steel Battalion that included a custom controller with over 40 buttons. It was brilliant. We played it at work (at lunch) for months. It was the most immersive gaming experience I'd had short of going to an arcade and getting into one of those $100K flight simulators. Of course, the controller was $200 - as much as an Xbox.

We've got to break out of this Mouse and Keyboard rut we're in as a culture (this includes you Quake and Unreal Tournament folks who insist a mouse and keyboard is the Only Way to Play - you're not helping!) and move into the Minority Report Multi-Touch Interaction world that folks like Jeff Han are pioneering.

...or intuiting intent via hand gestures

Now, $30k for something like Jeff Han's solution is (currently) untenable. But surely all these webcams, along with the brilliance of folks like Ashish and his Gesture Recognition stuff, or the amazing uMouse stuff that Larry Lart is working on (seriously, rush over there now and read what he's doing), should give us some reliable way of moving windows using our hands. Hopefully I'll get a review of his stuff and he'll get a download link up soon. He said it'll be in beta in the next week or so.

I figure it can't be that hard to watch a hand with a webcam, and there are only so many windows on the screen at a time, which narrows the number of things you'd want to do. Fine control of the mouse via gestures is a start, but a REALLY compelling solution would augment the mouse by using the webcam to track your hands and let you push windows from monitor to monitor in a multimon scenario, minimize windows, launch Google, etc.
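
To make that concrete, here's a rough sketch of the "small vocabulary" idea, not anyone's actual implementation. It assumes some tracker (not shown) already hands us the hand's position per webcam frame as normalized (x, y) coordinates; the names `classify_swipe`, `action_for` and the `ACTIONS` table are made up for illustration.

```python
from typing import List, Optional, Tuple

# Normalized (x, y) hand position in the camera image, each in 0..1.
# Assumption: a separate hand tracker produces one of these per frame.
Point = Tuple[float, float]

# Hypothetical mapping from a swipe direction to a window action.
ACTIONS = {
    "left": "move window to left monitor",
    "right": "move window to right monitor",
    "down": "minimize window",
    "up": "launch browser",
}


def classify_swipe(track: List[Point], min_travel: float = 0.3) -> Optional[str]:
    """Return 'left', 'right', 'up' or 'down', or None if the hand barely moved."""
    if len(track) < 2:
        return None
    dx = track[-1][0] - track[0][0]
    dy = track[-1][1] - track[0][1]
    # Ignore small movements so ordinary mousing doesn't trigger anything.
    if max(abs(dx), abs(dy)) < min_travel:
        return None
    if abs(dx) >= abs(dy):
        return "right" if dx > 0 else "left"
    # Image coordinates: y grows toward the bottom of the frame.
    return "down" if dy > 0 else "up"


def action_for(track: List[Point]) -> Optional[str]:
    """Look up the (hypothetical) window action for a tracked hand movement."""
    swipe = classify_swipe(track)
    return ACTIONS.get(swipe) if swipe else None


if __name__ == "__main__":
    # A hand moving across the frame from left to right.
    print(action_for([(0.2, 0.5), (0.5, 0.52), (0.8, 0.5)]))
```

With only four actions to tell apart, the classifier can afford to be crude; the hard part in practice is the hand tracking this sketch takes for granted.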

Certainly we could make it even easier by putting things on our hands (video of Atlas Gloves and Google Earth) and making the functionality very specialized.

Frankly I'm surprised that BillG would put so much work into Voice Recognition and Tablet but miss out on the opportunity to revolutionize User eXperience via a simple webcam. I don't need my webcam or computer to tell me if I'm sad - I'd like it to recognize my intent and act on it.

There's lots of Alternative Pointing Devices to choose from, but they all fall into the same tired metaphors. As mice go, personally I really like the Evoluent Vertical Mouse (it doesn't turn your hand unnaturally) and I also have Tablets for all my computers. I love my Ergodex, but I don't use it for coding like I used to. I'm convinced that gestures are the next big thing - and no, I don't mean mouse gestures. It's a huge shame that the Fingerworks guys went out of business. Their pad was brilliant. I know Rory is a huge fan of their iGesture pad. It was just too expensive. All these mechanisms are tired shadows of what a good gesture system could do.

Making cheap webcams recognize our hand gestures - even if I have to point the camera at my hand on the mouse - has huge potential. This is a 100% solvable software problem.

Of course, the real tragedy in all this? I'll never dance (or, "Dance Dance" rather) as well as this five-year-old.

About Scott

Scott Hanselman is a former professor, former Chief Architect in finance, now speaker, consultant, father, diabetic, and Microsoft employee. He is a failed stand-up comic, a cornrower, and a book author.

Mouse and hands are the old school - I'd like to control everything with my eyes: http://eyesclosed.org/showreel.html

Can you give us an update on your RSI injuries? How are your hands and back these days, after using these alternative input devices? Have any treatments helped?

Peter

(who also has sore hands and a sore back)

I strongly agree with you that the mouse / screen interaction is limiting us humans in moving forward in HCI and that recognizing gestures is the next logical step. I disagree, though, that mouse gestures are not a reasonable first step.

Before I was involved in software engineering, I was involved in electrical engineering and was heavily involved in CAD/CAM design. I clearly remember a very expensive CAD/CAM application that ran under UNIX that recognized gestures input via the mouse. As I recall, the gestures were recognized by moving the mouse without the need to click the button. Just make the gesture and you can perform functions like zoom in/out, pan, select, delete. The interface was extremely functional 10-15 years ago, and I have not seen anything approaching it since.
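
A minimal sketch of how click-free mouse gestures like these can be recognized, under stated assumptions: the pointer path arrives as a list of (x, y) pixel positions, the path is reduced to a short string of directions, and that string is looked up in a table. This is not how that CAD/CAM application worked (its internals aren't known here); the `GESTURES` table and the function names are hypothetical.

```python
from typing import Dict, List, Optional, Tuple

Point = Tuple[int, int]  # pointer position in pixels; y grows downward

# Hypothetical gesture table: direction string -> command.
GESTURES: Dict[str, str] = {
    "U": "zoom in",
    "D": "zoom out",
    "R": "pan",
    "DR": "select",  # an "L"-shaped stroke
    "LR": "delete",  # a back-and-forth scratch
}


def stroke_to_directions(path: List[Point], min_step: int = 20) -> str:
    """Reduce a pointer path to a string of U/D/L/R moves, collapsing repeats."""
    if not path:
        return ""
    directions: List[str] = []
    last = path[0]
    for point in path[1:]:
        dx = point[0] - last[0]
        dy = point[1] - last[1]
        # Skip tiny movements so hand jitter doesn't register as a stroke.
        if max(abs(dx), abs(dy)) < min_step:
            continue
        if abs(dx) >= abs(dy):
            direction = "R" if dx > 0 else "L"
        else:
            direction = "D" if dy > 0 else "U"
        if not directions or directions[-1] != direction:
            directions.append(direction)
        last = point
    return "".join(directions)


def recognize(path: List[Point]) -> Optional[str]:
    """Return the command for a stroke, or None if it matches no gesture."""
    return GESTURES.get(stroke_to_directions(path))


if __name__ == "__main__":
    # Down, then right: the "L"-shaped stroke.
    print(recognize([(0, 0), (0, 50), (0, 100), (60, 100), (120, 100)]))
```

Because the input is just pointer positions, the same table would work whether the points come from a mouse, a plain drawing tablet, or a pen.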

A good example of where this could be applied is a product that I heard about called Tablet UML. This seems like a really effective tool that allows for entering and editing UML diagrams via a Tablet PC. It would seem to me that all of the functionality could be reproduced on a non-Tablet PC if only the application would recognize plain mouse movements rather than the Tablet PC ink gestures. We know that there are very few real Tablet PCs being sold. While it is true that you can install Tablet PC Edition on a standard PC, we know that there are fewer of these. If an application like this were able to read the mouse directly, the application functionality could be opened up to a much wider audience.

I guess my hang-up is that I don't want to have N different input devices just because device (a) is required for application (a), etc. I would like to be able to use well-known input devices in better ways. I would limit this list to keyboard, mouse, plain drawing tablet, microphone and camera. Just my two cents.

Jim