Is there a good reason to mark a class public?

Paul Stovell was watching a talk I gave with Keith Pleas at TechEd US 2006 on building your own Enterprise Framework. The basic gist was that architecting/designing/building a framework for other developers is a different task than coding for end users.

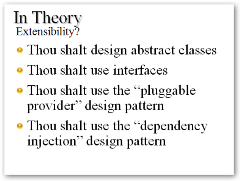

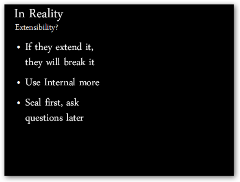

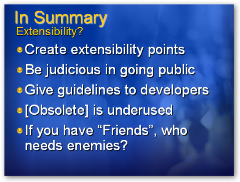

One thing that is valuable for context is that Keith and I were playing roles in this presentation. Keith was playing Einstein in his Ivory Tower, the developer who wants perfect purity and follows all the rules. I was playing Mort the realist, the developer who just wants to get the job done. We went back and forth with white slides for Keith and black for me, each of us declaring the extreme view, then coming together on the final slide with some pragmatic and prescriptive guidance.

Paul had an issue with the slide on Extensibility where I, as the hyper-realist, said:

- If they extend it, they will break it

- Use Internal more

- Seal first, ask questions later

He said:

Frankly, I think this is crap.

[Paul Stovell]

For goodness' sake, Paul, don't sugarcoat it, tell me how you really feel! ;) Just kidding. He has some interesting observations and (some) valid points.

If you are developing a framework or API for someone else to use, and you think you know more about how they plan to use your API than they do, you've got balls. [Paul]

I mostly agree with this. However, you certainly need to have SOME idea of what they are using it for, as you're on the hook to support it in every funky way they might use it. It is reasonable to have some general parameters for how your API should be used. If you design it poorly, it will likely get used in ways that may end up giving the developer a bad experience or even breaking the app.

For example, in a logging service we had a method called ConfigureAndWatch that mirrored the log4net ConfigureAndWatch. It's meant to be called once per AppDomain, and never again. Because it was poorly named (since we took the internal implementation's name) and didn't offer any suggestions (via exceptions, return values, or logging of its own), some users would call it on every page view within ASP.NET, causing a serious performance problem. There are a number of ways this problem could be solved, but the point is that there needs to be a boundary for the context in which an API is used. If we had constrained this more - and by doing that, presumed we know more than they do - then some problems would have been avoided.
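One of those ways is to make the second call fail loudly. Here's a minimal sketch, assuming a hypothetical wrapper (the names LoggingService, InitializeOncePerAppDomain and SetupRuns are made up for illustration and are not the API we actually shipped):

```csharp
using System;
using System.Threading;

// Hypothetical wrapper; these names are illustrative, not the real service.
public static class LoggingService
{
    private static int _initialized; // 0 = not configured yet, 1 = configured

    // Counts how often the expensive setup actually ran (illustration only).
    public static int SetupRuns { get; private set; }

    public static void InitializeOncePerAppDomain(string configPath)
    {
        if (configPath == null) throw new ArgumentNullException(nameof(configPath));

        // Interlocked.Exchange returns the previous value, so only the first caller sees 0.
        if (Interlocked.Exchange(ref _initialized, 1) == 1)
        {
            throw new InvalidOperationException(
                "Logging is already configured for this AppDomain. " +
                "Call InitializeOncePerAppDomain once at startup, not per request.");
        }

        // Assumption: the real work (for example, delegating to log4net) would go here.
        SetupRuns++;
    }
}
```

The name says when to call it, and the exception tells the per-request caller what they did wrong. Whether to throw, log a warning, or silently ignore the repeat call is a judgment call; throwing is just the loudest option.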

Scott goes on to give an example whereby he actually made every class "internal" in his API, and waited for users to tell him what classes they wanted to extend, and extended them one by one. [Paul]

This little bit of inspired brilliance was not my idea, but rather Brian Windheim's, an architect at Corillian. We had an application that consisted largely of base classes, and developers were insisting that they needed infinite flexibility. We heard "infinite" from the developers, but not the business owner. Brian theorized that they didn't need as much extensibility as they thought, and shipped an internal version that was basically sealed. When folks needed something marked virtual, they put it in a queue. The next internal version shipped with something like 7 methods in one class marked virtual - meeting the needs of all - when originally the developers thought they wanted over 50 points of extensibility.

The point of Brian's exercise was to find a balance between extensibility, both explicit and implicit, and supportability.

When you mark something virtual or make a class public, you as a framework designer are explicitly expressing support for the use of that API. If you choose to mark everything virtual and everything public as Paul advocates, be aware of not only the message you send to the downstream developer, but also the unspeakably large combinatorics involved when that developer starts using the API in an unexpected way.
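To make the "explicit support" idea concrete, here's a small sketch with one deliberately chosen extension point. All of the type and member names are hypothetical; this is not the Corillian code, just an illustration of the shape:

```csharp
using System;

// Hypothetical framework class; every name here is illustrative.
public class TransferProcessor
{
    // Non-virtual: the framework owns the overall flow and validation.
    public decimal Process(decimal amount)
    {
        if (amount <= 0m) throw new ArgumentOutOfRangeException(nameof(amount));
        return RoundToCents(amount + ComputeFee(amount));
    }

    // The one extension point the framework author has agreed to support.
    protected virtual decimal ComputeFee(decimal amount) => 0m;

    // Internal helper: not part of the supported surface, free to change.
    internal static decimal RoundToCents(decimal value) => Math.Round(value, 2);
}

// A downstream extension that stays inside the supported boundary.
// Sealed, because nobody has asked to extend it yet.
public sealed class FlatFeeTransferProcessor : TransferProcessor
{
    protected override decimal ComputeFee(decimal amount) => 1.50m;
}
```

With this shape, `new FlatFeeTransferProcessor().Process(10m)` goes through the framework's validation and rounding no matter what the override does, so the number of combinations you have to support stays small.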

Cyclomatic complexity can give you a number that expresses the complexity of a method and offer valuable warnings when something is more complex than the human mind can comfortably hold. There are other tools (like NDepend and Afferent Coupling, Lattix and its Dependency Structure Matrices, and Libcheck and its measure of the churn of the public surface area of a framework) that can help you express the ramifications of your design decisions in fairly hard metrics and good reporting.

If you mark all your classes and methods public, be informed of these metrics (and others) and the computer science behind them, and acknowledge that you're saying they aren't right for you. Just be aware of and educated about the potential consequences, be those consequences bad or good.

Can you honestly rely on people who are "just playing" with a technology to tell you which bits they will need to be extensible 12 months into the future?

You totally can't. When you're designing for Users, you do a usability study. When you're designing for Developers, you need to do a developability study.

Microsoft actually does more of this than most folks think. Sure, there are the Alphas, Betas and CTPs, but there are also TAPs (Technology Adoption Programs) and Deep Dives, where folks go to labs at Microsoft and work on new technology and frameworks for a week while folks take notes. These programs aren't for RDs or MVPs; they're for developer houses. If you're interested, ask your local Microsoft rep (whoever organizes your local Nerd Dinners perhaps) how you can get into an Early Adopter Program for whatever technology you're hoping to influence. They really DO listen. We just came back from a Deep Dive into PowerShell and got not only access to the team but a chance to tell them how we use the product and the direction we'd like to see it go.

Scotts [sic] philosophy, and that of many people at Microsoft (and many component vendors - Infragistics being another great example), seems to be to mark everything as internal unless someone gives them a reason to make it public.

That's not my philosophy, and I didn't say it was in the presentation. It was part of the schtick. The slides looked like this: Keith as Ivory Tower Guy first, then me as Realist Guy, and the "in actuality" slide last with guidance we could all agree on. However, I still think that marking stuff internal while you're in your design phase is a great gimmick to better understand your user and help balance good design with the important business issue of a supportable code base.

The salient point in the whole talk is this: be aware of the consequences of extremes and make the decision that's right for you and your company. (Very ComputerZen, eh?)

Paul's right that it is frustrating to see internal classes that do just what you want, but simply marking them public en masse isn't the answer, nor is marking everything internal.

About Scott

Scott Hanselman is a former professor, former Chief Architect in finance, now speaker, consultant, father, diabetic, and Microsoft employee. He is a failed stand-up comic, a cornrower, and a book author.


I actually like the idea of internalising everything and letting the consumers tell you what they want access to - as long as you can be rapidly reactive to a certain degree to open up those methods that ARE validly needed by consumers.

If you don't want your average developer to use it you should simply mark it with [EditorBrowsableAttribute(EditorBrowsableState.Never)] so it does not appear in the IntelliSense.

A developer that "really" wants to call that will be able to call it. Your average Joe won't do it anyway ;)
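For reference, that suggestion looks roughly like this in practice. The attribute and enum are real (System.ComponentModel); the Widget class and its members are hypothetical:

```csharp
using System;
using System.ComponentModel;

// Hypothetical type used only to show where the attribute goes.
public class Widget
{
    // Shows up in IntelliSense as usual.
    public string Render() => "widget";

    // Still public and callable, but hidden from IntelliSense for consumers
    // that reference the compiled assembly.
    [EditorBrowsable(EditorBrowsableState.Never)]
    public string RenderRaw() => "raw";
}
```

Keep in mind this is a hint to the editor, not an access rule: the call compiles fine, and the member is generally still listed when you're working inside the same assembly.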

Corneliu.

I really enjoyed reading your reply. When I watched the presentation and heard your slide, I was a little distracted by all these thoughts about making things internal that I didn't pay much attention to the next couple of slides - I didn't realise that you were playing "bad cop" on that slide. While I used your presentation as an introduction to my post, my issue wasn't really with your presentation, but with all of the frameworks and libraries in the past that have felt so "closed in".

After you highlighted a couple of things in my post I went back and re-read them, and in hindsight I think some of them sounded pretty harsh - but you can't expect much more from a country founded by convicts :) I hope you know I didn't mean any offence by them.

I liked your final paragraph - that there's a good balance you need to strike between making an API that people can really take advantage of, while making something that you can actually support into the future.

I still think there's other ways to enable good developers to make use of deeper parts of your framework that you didn't expect them to, without the implicit guarantees of support into the future that developers seem to expect from a public API. Maybe some kind of new keyword is needed - "public UseItAtYourOwnRisk class Paul { }" perhaps? :)

From this point, it would now become an issue of "this class was not designed to be used/overridden by external entities, but feel free to ignore at your own risk".

It works, and we haven't had any support nightmares from folks breaking it. Just developers who stretch the grid's ability beyond what our support staff can help them with, requiring some deeper help by the grid's original developers. In the end, we don't regret at all not sealing a lot of the classes; it is a decent selling point.

Remove the guesswork. Talk to your customer!

Normally, frameworks are quite specific in what they do and what they provide. If a whole 'chunk' (class) is required to be made public, then it may be the case that this functionality isn't in the realm of what the Framework was designed to offer. For example, should a class that lists the zipped files in a directory be exposed from a compression framework? Or, should a regular expression helper class be exposed from a logging/tracing framework?

...

More at http://stevedunns.blogspot.com/2006/10/what-to-expose-in-framework.html

Cheers,

Steve

public override unsupported void Foo(); // overriding a non-virtual method

Widget x = new Widget();

x.$InternalHelper(); // call a non-public method on an object

This gives you the best of both worlds. API designers can still direct the usage, and make explicit what they will support. Developers can still stray from the path when they feel it is absolutely necessary, and acknowledge the consequences.

So, my argument against yours is NOT that things should be public/private, rather what should be sealed (rarely, except low-level "ValueType-like" classes), and what should be public versus protected (NOT private).

You could accomplish the same effect using an attribute like "UnsupportedAttribute"
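A rough sketch of what such an attribute might look like follows. UnsupportedAttribute is not part of the .NET Framework; it and the Grid and SupportPolicy types are hypothetical names used only for illustration:

```csharp
using System;
using System.Reflection;

// Hypothetical marker attribute; it does not exist in the framework.
[AttributeUsage(AttributeTargets.Class | AttributeTargets.Method, Inherited = false)]
public sealed class UnsupportedAttribute : Attribute
{
    public UnsupportedAttribute(string reason) { Reason = reason; }

    public string Reason { get; }
}

// Hypothetical framework type with one "use at your own risk" member.
public class Grid
{
    public int RowCount => 3;

    [Unsupported("Layout internals may change between releases.")]
    public int MeasureRowHeight(int row) => 20 + row;
}

public static class SupportPolicy
{
    // True when the member carries the marker, so a build step or analyzer could warn.
    public static bool IsUnsupported(MemberInfo member) =>
        Attribute.IsDefined(member, typeof(UnsupportedAttribute));
}
```

On its own the attribute is only metadata, so something has to read it (a build check, documentation tooling, a code review rule). The existing ObsoleteAttribute is the closest built-in way to get a compiler warning at the call site, though its message says "obsolete" rather than "unsupported".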


this 'you need to do a developability study' concept is like jeffrey snover's discussion of using commandlets as the public facing api and otherwise keeping classes private.

jeffrey's insistence on 'technology that matters is technology that yields an economic advantage' can act as a good yardstick, focusing on "what practical and beneficial uses can these classes have?" (rather than just the superset of all possible uses) -- although this line of thinking probably also gets a bit vague and philosophical in practice.