Accidental Prescience and the Secrets of Project Natal

I can't remember which episode, but a few years ago I mentioned on my podcast that I didn't understand why companies were spending so much time with touch screens and multi-touch input devices when we all have a perfectly good input device staring at us, unused, every day - our webcams. Minority Report was not only a great movie, but a great user experience idea.

Johnny Chung Lee (I thought he and I had a bromance going, but it's just a fauxmance. It's one way, sniff, he doesn't know I'm alive! ;) did some amazing work in this space using the Wii remote a while back.

Ever since I saw Minority Report, perhaps even before since it's such an obvious idea, I've been searching and trying to figure out when and how this is going to happen. From my point of view, there's just no reason I shouldn't be able to make a small gesture and push a window over to another monitor. Swipe down in the air, minimize. If it was reliable, it'd be a perfect and elegant addition to the mouse and keyboard.

Johnny now works for Microsoft, and recently we learned that he's been working with the team that is doing Project Natal. If you've been under a virtual rock, here's a video of what Natal does. Basically it tracks your body and you become the game controller. If it works, it'll be epic. If it fails, it'll be sad. The real question is WHEN. My bet is Christmas, only because it's obvious.

From Johnny's Blog:

The 3D sensor itself is a pretty incredible piece of equipment providing detailed 3D information about the environment similar to very expensive laser range finding systems but at a tiny fraction of the cost. Depth cameras provide you with a point cloud of the surface of objects that is fairly insensitive to various lighting conditions allowing you to do things that are simply impossible with a normal camera.

But once you have the 3D information, you then have to interpret that cloud of points as "people". This is where the researcher jaws stay dropped. The human tracking algorithms that the teams have developed are well ahead of the state of the art in computer vision in this domain. The sophistication and performance of the algorithms rival or exceed anything that I've seen in academic research, never mind a consumer product. At times, working on this project has felt like a miniature “Manhattan project” with developers and researchers from around the world coming together to make this happen.
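To make the "point cloud" idea a bit more concrete, here's a minimal sketch of how a depth image could be turned into 3D points using a standard pinhole camera model, followed by a crude depth cutoff to keep only the nearby points. This is emphatically not Natal's algorithm (nothing about that has been published here); the function names, the intrinsics `fx`, `fy`, `cx`, `cy`, and the threshold are all assumptions made up for illustration, and real human tracking is far more sophisticated than a distance filter.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]


def depth_to_point_cloud(
    depth: Sequence[Sequence[float]],
    fx: float,
    fy: float,
    cx: float,
    cy: float,
) -> List[Point]:
    """Back-project a depth image into 3D points (pinhole camera model).

    depth[v][u] is the distance along the camera axis at pixel (u, v).
    fx, fy are focal lengths in pixels; cx, cy is the principal point.
    All of these are hypothetical values, not real sensor parameters.
    """
    points: List[Point] = []
    for v, row in enumerate(depth):
        for u, z in enumerate(row):
            if z <= 0:
                # Treat zero or negative depth as "no reading" and skip it.
                continue
            x = (u - cx) * z / fx
            y = (v - cy) * z / fy
            points.append((x, y, z))
    return points


def keep_near_points(points: Sequence[Point], max_depth: float) -> List[Point]:
    """Naive foreground filter: keep points closer than max_depth."""
    return [p for p in points if p[2] < max_depth]


if __name__ == "__main__":
    # A tiny 2x2 "depth image"; 0.0 marks a pixel with no reading.
    depth_image = [
        [0.0, 2.0],
        [1.0, 4.0],
    ]
    cloud = depth_to_point_cloud(depth_image, fx=1.0, fy=1.0, cx=0.0, cy=0.0)
    print(keep_near_points(cloud, max_depth=3.0))
```

The hard part Johnny is describing starts after this step: deciding which of those points belong to a person, and where that person's limbs are.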

Before the world (or I) had ever heard of Project Natal, I pounced on, er, interviewed Johnny at Mix 09 in Las Vegas. Recently Raleigh Buckner mentioned on Twitter that there was a lot "said without actually saying" in that interview, and darn it, he's right. I asked the right questions, and Johnny answered, but we (the collective) didn't see!

Now, go watch the interview again, this time with the knowledge of Project Natal's existence...

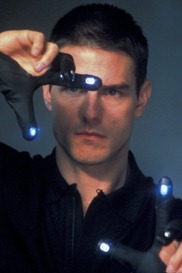

Johnny Lee on Computer Vision

Wow. I just bumped into Johnny Lee in the halls here at Mix09. I'm a huge fanboi with a man-crush on this dude. You've seen Johnny before on Channel 9 talking to Robert Hess. Johnny's a legend (in my mind) in the computer vision space, and he put up with me gushing at him here at Mix09. We chatted in the hall about computer vision, what he's working on, how he got the gig at Microsoft and where he sees the future of human-computer-interaction.

Crazy stuff. I'm very excited to see how far they can take this.

About Scott

Scott Hanselman is a former professor, former Chief Architect in finance, now speaker, consultant, father, diabetic, and Microsoft employee. He is a failed stand-up comic, a cornrower, and a book author.

Sneeze, though, and you might blow away your entire desktop :/

Lovely stuff....

It is a shame that you and others at Microsoft cannot at least be informed of these new initiatives well in advance to bring this stuff together in a formal manner. Or maybe you were supposed to know, but you missed that "special meeting" in Building XX. Especially when you interview Johnny Lee and he has to dance around what he can say. And you know he's wondering what Scott may already know and who may have leaked something to him.

Anyway, I wanted to share two blog posts with you... The first one is not very interesting (talking about Nathan & Johnny), but you can find some useful information in the second one, especially the link to the company that Microsoft bought recently and that developed a very similar solution :)

http://martinzugec.blogspot.com/2009/06/interesting-information-about-project.html

http://martinzugec.blogspot.com/2009/06/project-natal.html

So I think it will be cool but geek-country only.

Daniel, agreed...except when it comes to Mobile vs. iPhone. The iPhone is just now getting to where my PPC was four years ago. In the Mobile market, MS is losing the PR war, not the RTM war.

ciao, D

microsoft has said natal is pretty far away, alluding to a 2010 release. but i don't know.. shane kim mentioned in an interview that MSR have been working on the algorithms in natal for several years and that "the team was looking for some hardware to put them into" and that was where the 3dv zcam came in.. that deal was actually set up late last year and locked some time in march i think.

also, since they have devkits and a fairly large amount of them too, i believe they are in the process of designing the device hardware-wise (pcb layout and packaging and stuff)

kotaku reported that the natal in the secret e3 demo looked different from the posters, more the size of a projector.. there are also several reports of a laptop connected to natal. i personally believe that the embedded processor that is going to be in natal isn't done yet. it's likely that the e3 natal software ran on the laptop, not on the natal itself.

very very interesting stuff.. scanning twitter for news is good but we really need more tech demos and from microsoft :) and a windows driver ;)
